This is a guest post by Matt Heusser. Matt is the Managing Director of Excelon Development, with expertise in project management, development, writing, and systems improvement. And yes, he does software testing too.

Most of us are familiar with the development benefits of small batches. The company can release the most important features and gain value in weeks, days, or hours, instead of waiting to release the entire kitchen sink in six months. Even if we did not realize this, Agile Dogma says that small batches are better, so people are inclined to release more often.

Regression testing is probably the single biggest struggle for teams I see moving to something like Scrum; fitting regression testing into a two-week cycle is more than a bit of a challenge. Instead, teams often sprint along for two, three, four, or five sprints, then have a “hardening sprint” or two (or three). These are essentially test/fix/retest sprints.

The idea here is to reduce time spent testing, compared to other activities. Yet teams that try this rarely see that benefit. Instead, they release software less often while the time for regression testing balloons. Of course it does.

Code Changes Create Uncertainty

Every time a programmer makes a change, they create a little bit of uncertainty. If the change is small, a “one point story” for example, and a software tester tests the story the same day, the uncertainty decreases, but only to a degree: it is still possible that the change had some unintended consequence somewhere else in the software.

These changes add up over time. Eventually, we end up in regression-test and fix/retest cycles. The longer we wait between rounds of testing, the more uncertainty and the more defects we will have, which means we need more testing with better coverage. Because more calendar time will have elapsed, tracing a defect to a change, and to the programmer who made that change, will be hard or impossible. The more time that has gone by, the further removed the programmer assigned to the fix will be from that piece of code. As a result, fixes are less likely to be correct and will require more debugging.

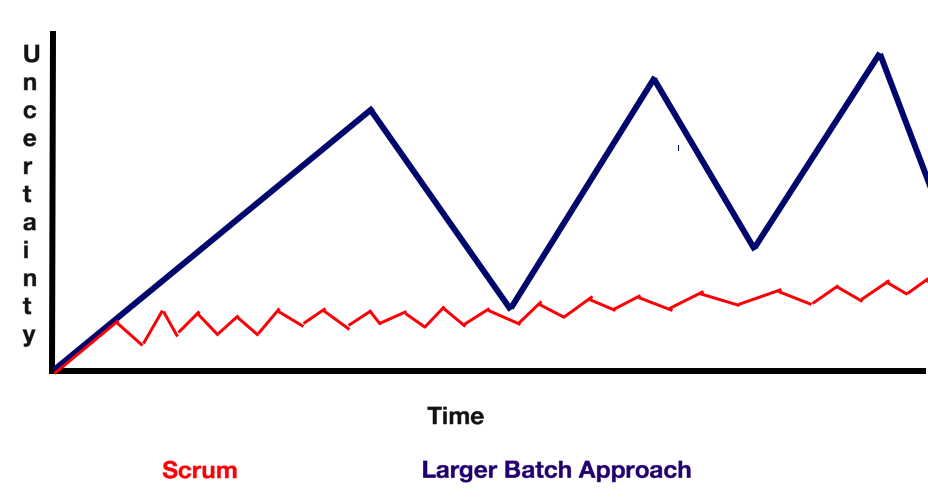

The graph above shows the problem, comparing a team that ships every two-week sprint with a team that ships at the end of each “project.” The lines that go up are programming, which increases uncertainty, while the lines that go down are the regression-test steps, which decrease it.

Note that uncertainty tends to build slowly over time. Under Scrum, it is possible to spend an entire sprint on bug fixing, testing, and eliminating technical debt. In contrast, with waterfall, at least in my experience, the drumbeat of project delivery means we ship when the deadline hits and immediately start working on the next project.

Take a look at the diagram above for a minute. Think about uncertainty. Notice: The longer the development phase, the more uncertainty, the more time we need for test/fix/retest cycles. This leads to Heusser’s rule of regression testing:

Shipping more frequently means less uncertainty, means less test/fix/retest cycles, means shorter regression test effort.
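To make the rule concrete, here is a minimal sketch of a toy model. It is not from the article's data: the function name `regression_effort` and the `growth` exponent are assumptions made purely for illustration. The one modeling assumption is that the effort to clear the uncertainty from a batch of work grows faster than the batch itself, which is one way to express the point above that defects get harder to trace as time passes.

```python
def regression_effort(total_days, release_every, growth=1.5):
    """Total regression effort over a project, under a toy model.

    Assumption (hypothetical, for illustration only): clearing the
    uncertainty built up over `batch` days of development costs
    batch ** growth units of effort. growth > 1 means long batches
    cost disproportionately more to regression-test.
    """
    effort = 0.0
    remaining = total_days
    while remaining > 0:
        batch = min(release_every, remaining)
        effort += batch ** growth
        remaining -= batch
    return effort


# Same 120 days of development, two release cadences.
per_sprint = regression_effort(120, release_every=10)
per_project = regression_effort(120, release_every=60)
print(f"ship every 10 days: {per_sprint:.0f} units of regression effort")
print(f"ship every 60 days: {per_project:.0f} units of regression effort")
```

Under this assumption the team that ships every 10 days spends less total effort on regression than the team that ships every 60 days. With `growth=1.0` the two cadences cost the same, so the whole argument rests on the claim that uncertainty compounds.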

While we tend to understand this at the team level, when it comes to teams-of-teams, we often get into trouble. For example, the Scaled Agile Framework starts with an example of a Product Increment, or PI, typically structured as three or four development sprints followed by three hardening sprints.

Most people interpret “hardening” as test/fix/retest, but the real intent behind the term was for teams to integrate with each other’s work. So, while one team may have perfectly good-to-go software each release, they might rely on another component that does not exist yet, or is being changed. In that case, for a short period, I can understand hardening sprints as a transition concept. If the transition never ends and the software is not suitable for shipping per sprint, the cost of testing and the uncertainty will both rise. Eventually, those hardening sprints will become testing sprints. The economy of testing less often will be proved false.

If it hurts, do more of it. If integration is expensive, do it more often. Here’s why:

Release-Test Calculus

When Isaac Newton wanted to find the area under a curve, he started off by approximating the area using boxes. Four smaller boxes would be more accurate than two, and eight more accurate than four, and so on. Eventually Newton realized that an infinite number of infinitely small boxes would be the most accurate.
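The box approximation can be sketched in a few lines. This is a minimal illustration, assuming left-edge boxes and the curve y = x² on [0, 1] (true area 1/3); the function name `area_with_boxes` and the choice of curve are mine, not the article's.

```python
def area_with_boxes(f, start, end, boxes):
    """Approximate the area under f on [start, end] with equal-width boxes.

    Each box takes its height from the curve at the box's left edge.
    """
    width = (end - start) / boxes
    return sum(f(start + i * width) * width for i in range(boxes))


def square(x):
    return x * x


true_area = 1 / 3
for boxes in (2, 4, 8, 1000):
    estimate = area_with_boxes(square, 0.0, 1.0, boxes)
    print(f"{boxes:>4} boxes: area = {estimate:.5f}, error = {abs(true_area - estimate):.5f}")
```

Each doubling of the number of boxes shrinks the error, which is the pattern the release-frequency argument borrows.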

If Heusser’s rule is true, it leads to the wacko idea that, on some projects, we can release so often that we have an infinite number of infinitely small regression-test cycles. Dividing infinity by infinity, we find the regression-test cycles go away.
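One hedged way to write that limit down, under an assumption that is mine rather than the article's: suppose a project has $T$ days of development, released in batches of $b$ days, and that regression-testing one batch costs $c\,b^{g}$ with $g > 1$ (cost grows faster than batch size). The symbols $T$, $b$, $c$, and $g$ are all hypothetical. The total regression effort is then the number of cycles times the cost per cycle:

$$
E(b) = \frac{T}{b} \cdot c\,b^{g} = c\,T\,b^{\,g-1}, \qquad \lim_{b \to 0} E(b) = 0 \quad \text{for } g > 1 .
$$

As $b$ shrinks, the number of cycles $T/b$ grows without bound while the cost of each cycle $c\,b^{g}$ shrinks toward zero, and the shrinking wins. If $g = 1$, the total stays at $c\,T$ no matter how often we release, so the conclusion depends entirely on uncertainty compounding with batch size.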

Now, that isn’t every project, and most teams have a lot of work to do before they can get to that place, including reducing the defect-injection rate, isolating the components, having independent deploys, and building the ability to find and roll back problems quickly. Nor does regression testing go away; instead, it happens all the time, with a constantly updated list of emergent risks investigated by humans, combined with smart automated checking by the computer.

This is Heusser’s rule of regression-testing:

“Shipping more frequently means less uncertainty, means less test/fix/retest cycles, means shorter regression test effort.”

Put that on a t-shirt. More importantly, change behaviors to recognize that as reality.

Because it is.