This is a guest post by Bob Reselman.

I’m a big fan of ephemeral computing. It makes sense because companies want to get the most out of what they spend on computing: Nobody wants to pay for computing power that goes unused.

Ephemeral computing lets companies pay only for the computing power they need, exactly when they need it — whether it’s at production-level deployment or earlier on during the various stages of development and throughout the required testing events.

Ephemeral computing is indeed a powerful approach to technology, but it can be challenging.


The Power of Ephemeral Computing

Ephemeral computing can be implemented in a variety of ways. Some companies go pure serverless, putting application and testing logic in serverless functions. They then expose the functions through an API gateway or trigger them with messages from a message queue.

While serverless computing is growing in popularity, it’s still cutting-edge for many companies. The more common technique is to dynamically provision a computing environment with virtual artifacts using an orchestration technology. This type of provisioning creates virtual machines (VMs) and containers when needed and destroys them after use.

This is particularly effective when doing performance testing. Many times resetting a test by simply redeploying the environment under test from scratch is a lot easier than having to roll back all databases or file systems throughout the deployment. Dynamic provisioning is fast and reliable.

There are a number of ways to dynamically provision a computing environment. You can use technologies such as Kubernetes and the Helm package manager to create and destroy environments using nothing more than a set of command-line instructions. But this is useful only for shops that have full support for Kubernetes.

Another way is for companies to take a more traditional approach and use a provisioning tool such as Ansible, Chef or Puppet.


Understanding Pull vs. Push Provisioning

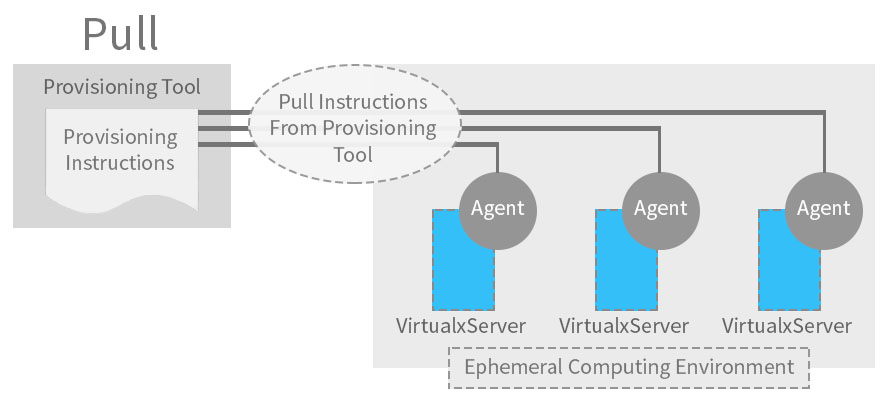

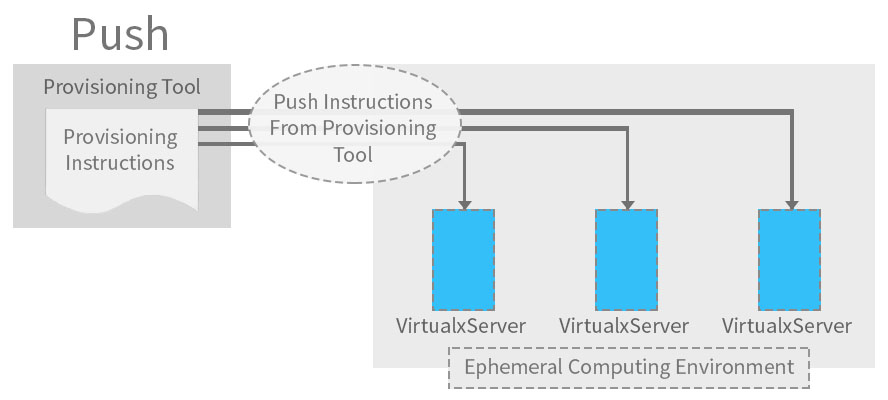

I like Ansible because it’s an agentless technology that pushes provisioning instructions directly to the given ephemeral environment. Puppet and Chef are “pull” technologies. (See figure 1 below.)

Figure 1: Pull provisioning requires an agent to get instructions from a server; push provisioning sends instructions directly to the ephemeral computing environment

Push provisioning differs from pull provisioning in a fundamental way: It executes installation and configuration commands directly against the target being provisioned. Pull provisioning requires an agent to be installed on the host systems. The agent looks to the provisioning server for instructions to follow and the artifacts to install, and then installation and configuration take place directly on the machine that hosts the agent. Push vs. pull is an important distinction: Push requires no agent on the host; pull does.

Ansible Uses Push Provisioning

Ansible performs provisioning by executing a provisioning script written in YAML format. Ansible calls the script a playbook.

Listing 1 below shows a simple Ansible playbook file for installing NGINX on a Linux machine. After installing NGINX, the playbook copies the file index.html to the NGINX directory defined for the static web page and then starts up NGINX.

    - name: Install nginx
      hosts: host.name.ip
      become: true
      tasks:
        - name: Add epel-release repo
          yum:
            name: epel-release
            state: present
        - name: Install nginx
          yum:
            name: nginx
            state: present
        - name: Insert Index Page
          template:
            src: index.html
            dest: /usr/share/nginx/html/index.html
        - name: Start nginx
          service:
            name: nginx
            state: started

Listing 1: A simple Ansible playbook for getting NGINX installed and running on a Linux machine, from Ansible’s Getting Started webpage.

The name of the file in Listing 1 is simplenxingx.yml. Thus, to run the playbook, you type the following at the command line:

    ansible-playbook simplenxingx.yml

I’ve omitted many of the details necessary to get a full understanding of the playbook shown above. The Ansible Getting Started webpage provides an explanation of the basic concepts. The important thing to understand is that Ansible is executing commands against target machines directly. No target machine requires the presence of an agent. Pushing to a machine in the way Ansible does makes ephemeral provisioning a straightforward undertaking.
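One detail worth sketching is how Ansible knows where to push. Targets are listed in an inventory, which can itself be written in YAML. The example below is a minimal sketch, not part of the original listing: the group name `webservers`, the file name `inventory.yml` and the login user `deploy` are assumptions made for illustration, while `host.name.ip` is the placeholder host from Listing 1.

```yaml
# inventory.yml (hypothetical file name)
# Lists the machines Ansible pushes to. Nothing is installed on them
# ahead of time; by default Ansible connects over SSH.
all:
  children:
    webservers:            # assumed group name, not from the article
      hosts:
        host.name.ip:      # placeholder host used in Listing 1
          ansible_user: deploy   # assumed SSH login user
```

With a file like this in place, the playbook could be run as `ansible-playbook -i inventory.yml simplenxingx.yml`. Because the target only needs to be reachable and have a login, a freshly created VM can be provisioned as soon as it boots.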

Ansible Makes Performance Testing Efficient

Using Ansible to provision machines under test as well as machines that will act as test controllers saves time and money.

Industrial-strength test environments that are meant to accommodate the load of millions of users can be expensive to emulate. Some environments under test might require hundreds of VMs or containers that represent thousands of interdependent microservices. The test controllers that need to exercise these types of environments can be as big and complex as the systems under test. Whether it’s a test controller or test target, each of these VMs costs money. When they’re not used, it’s money wasted.

The benefit Ansible brings to the table is the essential value proposition of ephemeral computing. Ansible allows you to quickly and reliably set up the environment you need to do the work. Once your testing objectives are met, you destroy the resources you created. You pay only for what you need, when you need it. It’s a value proposition that’s hard to ignore.
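The cleanup half of that cycle can be a playbook too. The sketch below simply reverses Listing 1 on the same placeholder host. It assumes Listing 1 has already run, so the nginx service exists to be stopped. It is a lightweight illustration only: destroying the VM or container itself depends on the cloud or virtualization platform in use, which the article does not specify.

```yaml
# teardown.yml (hypothetical file name): undoes the steps in Listing 1
- name: Tear down nginx after a test run
  hosts: host.name.ip
  become: true
  tasks:
    - name: Stop nginx
      service:
        name: nginx
        state: stopped
    - name: Remove nginx
      yum:
        name: nginx
        state: absent
    # Succeeds whether or not the file is still there
    - name: Remove the index page
      file:
        path: /usr/share/nginx/html/index.html
        state: absent
```

Running the setup playbook before a test and a teardown playbook like this one afterward returns the machine to a known state for the next run.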

Putting It All Together

Ansible has been growing in popularity since its release in 2012. Today the technology supports more than 300 modules that let you deploy and manage software from a variety of vendors and projects, including Windows, RabbitMQ, MongoDB and MySQL, with little more effort than an entry in an Ansible playbook.

Using Ansible to dynamically provision not only environments under test, but also the test controllers themselves, makes ephemeral computing available to a large audience, from novice test practitioners to the experienced software developer in test. Ephemeral computing looms large in the future of the practice of modern systems testing. Ansible makes the technology accessible to all.

Article by Bob Reselman, a nationally known software developer, system architect, industry analyst and technical writer/journalist. Bob has written many books on computer programming and dozens of articles about topics related to software development technologies and techniques, as well as the culture of software development. Bob is a former Principal Consultant for Cap Gemini and Platform Architect for the computer manufacturer Gateway. In addition to his software development and testing activities, Bob is in the process of writing a book about the impact of automation on human employment. He lives in Los Angeles and can be reached on LinkedIn at www.linkedin.com/in/bobreselman.
