This is a guest posting by Bob Reselman

There are reasons Kubernetes adoption is growing by leaps and bounds. The technology provides a way to create and manage multi-instance, container-based applications that are designed to run at web scale on a large number of machines, either virtual or bare-metal. Kubernetes also provides a high degree of resilience and predictability that makes adopting the technology a compelling path forward for the modern enterprise.

A key benefit of Kubernetes is that it supports dynamic environment provisioning. Kubernetes allows system admins to add and remove containers and nodes running applications as needs increase or diminish. Nothing is written in stone with Kubernetes; the technology is highly flexible.

Yet, while Kubernetes provides many benefits, there are tradeoffs. Kubernetes puts containers and container orchestration at the forefront of systems design. This affects not only the way architects design applications but also the way QA professionals approach testing.

While it’s still true that applications running in a Kubernetes cluster will need to be tested from both outside and inside the cluster, Kubernetes requires a new approach to testing on the inside. In the past, tests running internally on a deployment could depend on a fairly stable environment. However, Kubernetes environments are dynamic. Any number of containers and nodes can be running at any time, so providing internal tests with configuration information can be tricky.

Putting configuration information in a static text file as part of the application deployment won’t do. First, putting sensitive information in a text file is a bad security practice. Second, even if using text files were acceptable, the information in the file can become invalid at any time. Remember, Kubernetes is a dynamic environment. Configuration requirements can change at a moment’s notice. This is true for configuration information that is general to the application as well as for information that is particular to a given test.

We need a secure way to provide configuration information on a “just-in-time” basis. This is where Kubernetes Secrets become useful.

Creating a Kubernetes Secret

A Secret is a Kubernetes resource that provides a way to store sensitive information, such as passwords and keys, within a cluster. (By default, Secret values are only base64-encoded; they are encrypted at rest only if the cluster’s administrators enable encryption for Secrets.) DevOps personnel can create Secrets imperatively using command-line instructions, or declaratively by writing a manifest file in YAML or JSON format that describes the Secret. Once a manifest is created, a person or automation script injects the Secret into a particular cluster using the command:

kubectl create -f /path/to/manifest/a_secret_manifest.yaml

Where:

- kubectl is the executable for invoking commands against a Kubernetes cluster

- create is the subcommand that creates a Kubernetes resource in the cluster from the information in a manifest

- -f is the option that indicates a file will be used to create the Kubernetes resource

- /path/to/manifest/a_secret_manifest.yaml is the path to the manifest file, which can be in YAML or JSON format
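Because kubectl accepts JSON manifests as well as YAML, a test harness can generate a Secret manifest programmatically instead of keeping one on disk. The Python sketch below is a minimal illustration; `build_secret_manifest` is a hypothetical helper, and the credential values are placeholders. Anything it prints holds the credentials in plain text, so treat the output with the same care as a hand-written manifest.

```python
import json


def build_secret_manifest(name, string_data, namespace=None):
    """Return an Opaque Secret manifest as a dict (hypothetical helper)."""
    metadata = {"name": name}
    if namespace is not None:
        # Without a namespace, kubectl uses the namespace of the current context.
        metadata["namespace"] = namespace
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": metadata,
        "type": "Opaque",
        # stringData takes plain strings; the API server stores them base64-encoded under data.
        "stringData": dict(string_data),
    }


if __name__ == "__main__":
    # Print JSON to stdout so it can be redirected to a file or piped to kubectl.
    manifest = build_secret_manifest("dbsecret", {"username": "mysqladmin", "password": "change-me"})
    print(json.dumps(manifest, indent=2))
```

Saved as a script (the name make_secret.py is hypothetical), the output can be applied with `python make_secret.py | kubectl create -f -`, where `-f -` tells kubectl to read the manifest from standard input.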

Below is an example of a manifest file in YAML format. The Secret is assigned the name dbsecret and contains username and password credentials.

```yaml
---
apiVersion: v1
kind: Secret
metadata:
  name: dbsecret
type: Opaque
stringData:
  username: mysqladmin
  password: 4a10b2e1b155444!b1956c2ff6a274c)
```
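The stringData field is a write-time convenience: the API server merges it into the Secret’s data field, where each value is stored base64-encoded, and the data field is what `kubectl get secret dbsecret -o yaml` displays. Base64 is an encoding, not encryption, as the short Python sketch below shows; both helper names are hypothetical.

```python
import base64


def to_data_field(string_data):
    """Encode stringData values the way they appear in a Secret's data field."""
    return {key: base64.b64encode(value.encode("utf-8")).decode("ascii")
            for key, value in string_data.items()}


def from_data_field(data):
    """Decode a Secret's data field back to plain strings; no key or password is needed."""
    return {key: base64.b64decode(value).decode("utf-8") for key, value in data.items()}


encoded = to_data_field({"username": "mysqladmin"})
print(encoded)  # {'username': 'bXlzcWxhZG1pbg=='}
```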

As mentioned above, it’s possible to create a Secret imperatively at the command line. The following command creates a Secret at the command line using the --from-literal option with a string literal that describes a key-value pair.

kubectl create secret generic mysecret --from-literal='A_SECRET=apples-taste-great'

This creates a Secret with the name mysecret. The Secret will have a key, A_SECRET, and that key will be assigned the value apples-taste-great.
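kubectl splits a --from-literal argument at the first equals sign, so the value itself may contain further = characters, and it rejects an argument with no key. The Python sketch below mimics that mapping for illustration only; `parse_literal` and `literals_to_string_data` are hypothetical names, not part of kubectl.

```python
def parse_literal(source):
    """Split a --from-literal argument into (key, value) at the first '='."""
    index = source.find("=")
    if index <= 0:
        # No '=' at all, or an empty key such as "=value", is rejected.
        raise ValueError(f"expected key=value, got {source!r}")
    return source[:index], source[index + 1:]


def literals_to_string_data(sources):
    """Build a stringData-style dict from several --from-literal arguments."""
    data = {}
    for source in sources:
        key, value = parse_literal(source)
        if key in data:
            # Repeating a key in one command is an error rather than an overwrite.
            raise ValueError(f"duplicate key {key!r}")
        data[key] = value
    return data
```

Note that the shell strips the single quotes in the command above, so kubectl receives `A_SECRET=apples-taste-great`, which `parse_literal` maps to the pair `("A_SECRET", "apples-taste-great")`.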

You can also create a Secret with information stored in a text file using the option --from-file. The example below shows the command-line instruction for creating a Secret from a file.

kubectl create secret generic yoursecret --from-file=./secret_files/X_SECRET

The command above creates a Secret assigned the name yoursecret. The Secret will contain a key-value pair in which the key will be X_SECRET and the value will be the contents of the text file. Thus, a file named X_SECRET that has the line:

Beware of security problems

will have a key-value pair:

X_SECRET=Beware of security problems
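With --from-file, the key defaults to the file’s base name (kubectl also accepts `--from-file=key=path` to choose a different key), and the value is the file’s contents byte for byte, so a trailing newline in X_SECRET becomes part of the value. The Python sketch below models that mapping; `file_to_entry` is a hypothetical helper, and the temporary file stands in for ./secret_files/X_SECRET.

```python
import os
import tempfile


def file_to_entry(path, key=None):
    """Return (key, value) the way --from-file maps a file into a Secret entry.

    The key defaults to the file's base name; the value is the raw file bytes,
    including any trailing newline.
    """
    with open(path, "rb") as handle:
        contents = handle.read()
    return (key or os.path.basename(path)), contents


# Stand-in for ./secret_files/X_SECRET, created in a throwaway directory.
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "X_SECRET")
    with open(path, "wb") as handle:
        handle.write(b"Beware of security problems\n")
    key, value = file_to_entry(path)

print(key, value)  # X_SECRET b'Beware of security problems\n'
```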

Admittedly, this pattern of creating a Secret’s key-value pair according to filename and file contents is a bit awkward. The preferred method in many IT shops for creating Secrets is to use manifest files. These manifest files need to be well protected and available to as few systems and system admins as possible.

Once a Secret is created, it’s then available to be used by other Kubernetes resources, provided the Secret and the resource share the same Kubernetes namespace. The example below shows the manifest for a Secret dedicated to the namespace accounting.

```yaml
---
apiVersion: v1
kind: Secret
metadata:
  name: filesystemsecret
  namespace: accounting
type: Opaque
stringData:
  username: testadmin
  password: ac36c881c5294edb85a7365d9fa1a534
```

Those learning how to use Secrets usually find it easiest not to declare a particular namespace for the Secret, letting it go into the default namespace instead. However, when it comes time to use namespaces in production, remember that a Secret can be referenced only by resources in its own namespace, so the Secret and the resources that use it must share the same Kubernetes namespace.
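Because a pod can reference only Secrets in its own namespace, a pre-deployment check can catch mismatches before the pod fails to start. The Python sketch below assumes the manifests have already been loaded into dicts and that `existing_secrets` is a hypothetical set of (namespace, name) pairs; it treats a missing namespace as "default", which matches kubectl only when the current context uses the default namespace.

```python
def missing_secret_refs(pod, existing_secrets):
    """Return Secret names a pod's env references that do not exist in the pod's namespace.

    existing_secrets is a set of (namespace, name) pairs, e.g. gathered from manifests.
    A pod without metadata.namespace is assumed to land in "default".
    """
    namespace = pod.get("metadata", {}).get("namespace", "default")
    missing = []
    for container in pod.get("spec", {}).get("containers", []):
        for var in container.get("env", []):
            ref = var.get("valueFrom", {}).get("secretKeyRef")
            if ref is None:
                continue  # plain value or a non-Secret source
            name = ref["name"]
            if (namespace, name) not in existing_secrets and name not in missing:
                missing.append(name)
    return missing
```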

Using a Kubernetes Secret

There are a variety of ways to use a Kubernetes Secret. One is to store a TLS key pair (a certificate and its private key) in a Secret that a Kubernetes Ingress uses to provide secure access to an application running under Kubernetes at a particular URL. (An Ingress is a Kubernetes resource that defines public access to a Kubernetes service according to a particular URL.)

Below is an example of a manifest for a Kubernetes Ingress that uses the TLS key pair stored in a Secret named example-com-tls.

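```yaml
---
# networking.k8s.io/v1 replaces extensions/v1beta1, which Kubernetes 1.22 removed for Ingress.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: nginx
spec:
  tls:
    - secretName: example-com-tls
      hosts:
        - example.com
  rules:
    - host: example.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: nginx
                port:
                  number: 80
```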
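The Secret that an Ingress references for TLS is typically of type kubernetes.io/tls, with the certificate and private key stored under the keys tls.crt and tls.key. The Python sketch below builds such a manifest from PEM text; `build_tls_secret` is a hypothetical helper and the PEM strings are placeholders. From the command line, `kubectl create secret tls example-com-tls --cert=tls.crt --key=tls.key` (file names assumed) produces the same structure.

```python
import base64


def build_tls_secret(name, cert_pem, key_pem, namespace=None):
    """Return a kubernetes.io/tls Secret manifest with base64-encoded tls.crt and tls.key."""
    def encode(text):
        return base64.b64encode(text.encode("ascii")).decode("ascii")

    metadata = {"name": name}
    if namespace:
        metadata["namespace"] = namespace
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": metadata,
        "type": "kubernetes.io/tls",
        # The data field requires base64 values; ingress controllers look up these two keys.
        "data": {"tls.crt": encode(cert_pem), "tls.key": encode(key_pem)},
    }
```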

Another use of a Kubernetes Secret is to define information that gets injected into an environment variable of a container running in a Kubernetes pod. (A pod is a Kubernetes resource that hosts one or more containers. Pods are a fundamental resource in the Kubernetes ecosystem.)

The example below shows the manifest for a pod that assigns values stored in a Secret to a container’s environment variables.

```yaml
---
apiVersion: v1
kind: Pod
metadata:
  name: simplepod
  labels:
    app: simplepod
spec:
  containers:
    - name: simplepod
      image: reselbob/pinger
      ports:
        - name: app
          containerPort: 3000
      env:
        - name: CURRENT_VERSION
          value: V1
        - name: MYSQL_USERNAME
          valueFrom:
            secretKeyRef:
              key: username
              name: dbsecret
        - name: MYSQL_PASSWORD
          valueFrom:
            secretKeyRef:
              key: password
              name: dbsecret
```

Notice that the environment variables MYSQL_USERNAME and MYSQL_PASSWORD shown above get their values from a Secret referenced by the secretKeyRef attribute. The attribute identifies the Secret by its name, dbsecret, and names the key within that Secret (username or password) whose value is assigned to the variable.
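Conceptually, when the container starts, Kubernetes resolves each secretKeyRef by looking up the named Secret and copying the value stored under the given key into the environment variable. The Python sketch below models that lookup over plain dicts; it is a simplified illustration, not the kubelet’s implementation, and `resolve_env` is a hypothetical name.

```python
def resolve_env(container, secrets):
    """Return the environment variables a container would start with.

    secrets maps Secret names to {key: value} dicts, standing in for Secrets in the pod's namespace.
    """
    env = {}
    for var in container.get("env", []):
        if "value" in var:
            env[var["name"]] = var["value"]
            continue
        ref = var.get("valueFrom", {}).get("secretKeyRef")
        if ref is None:
            continue  # other sources (configMapKeyRef, fieldRef, ...) are out of scope here
        secret = secrets.get(ref["name"], {})
        if ref["key"] in secret:
            env[var["name"]] = secret[ref["key"]]
        elif not ref.get("optional", False):
            # Kubernetes would refuse to start the container in this case.
            raise KeyError(f"{ref['name']}/{ref['key']} not found for {var['name']}")
    return env
```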

The example below shows a list of environment variables in the container created using the manifest shown above.

```text
KUBERNETES_PORT=tcp://10.96.0.1:443
KUBERNETES_SERVICE_PORT=443
MYSQL_USERNAME=mysqladmin
NODE_VERSION=8.9.4
YARN_VERSION=1.3.2
HOSTNAME=simplepod
SHLVL=1
HOME=/root
TERM=xterm
KUBERNETES_PORT_443_TCP_ADDR=10.96.0.1
MYSQL_PASSWORD=4a10b2e1b155444!b1956c2ff6a274c)
PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin
KUBERNETES_PORT_443_TCP_PORT=443
KUBERNETES_PORT_443_TCP_PROTO=tcp
KUBERNETES_SERVICE_PORT_HTTPS=443
KUBERNETES_PORT_443_TCP=tcp://10.96.0.1:443
PWD=/
KUBERNETES_SERVICE_HOST=10.96.0.1
CURRENT_VERSION=V1
```

Notice that the environment variables MYSQL_USERNAME and MYSQL_PASSWORD contain the values stored in the Secret named dbsecret, as defined in the manifest shown in the very first example.
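A containerized test can read the same variables at startup and fail fast when one is missing, rather than failing later with a confusing connection error. The Python sketch below assumes the variable names from the pod manifest above; `require_env` and `ConfigError` are hypothetical names.

```python
import os


class ConfigError(RuntimeError):
    """Raised when required test configuration is missing."""


def require_env(*names, environ=None):
    """Return {name: value} for each required variable, or raise ConfigError.

    A variable that is unset or set to an empty string counts as missing.
    """
    environ = os.environ if environ is None else environ
    missing = [name for name in names if not environ.get(name)]
    if missing:
        # Report only the names; never echo secret values into test logs.
        raise ConfigError(f"missing environment variables: {', '.join(missing)}")
    return {name: environ[name] for name in names}
```

Inside the test container, `require_env("MYSQL_USERNAME", "MYSQL_PASSWORD")` would return both values injected from dbsecret; the `environ` parameter exists so the function can be exercised outside the cluster.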

The operational benefit is that the container gets its database access information from a source stored within the cluster rather than from files shipped with the application. The main exposure is anyone granted permission to read Secrets in the namespace, or a malicious actor who breaks into the cluster as an administrator and then into the container to read the data stored in its environment variables. Of course, the risk can be reduced by not giving the environment variables and the database access credentials such obvious names. Also, the credentials can be made more secure by encrypting them in a way that only code running in the container can decrypt.

An added benefit is that as demand on the application increases and more containers need to be created to handle the additional load, these new containers will automatically get credentials from the same Secret used by containers already running. Using a Secret is a safe, effective way to share information within a cluster.

Using a Secret for Test Configuration

Sharing access information among pods becomes particularly useful for tests that are intended to be run from within a cluster. One strength of Kubernetes is that its clusters are well encapsulated. If you’re on the outside of the cluster, the only way to send a request to intelligence in the cluster is to use a predesignated access point, a URL. Once in, that request can be routed to any container in any node in the cluster. Thus, those on the outside never really know for sure what’s going on inside.

Again, the opaque yet dynamic nature of a Kubernetes cluster is a strength, but it can also be a drawback when it comes time to do fine-grained testing, for example, performance testing a database that is running inside the cluster.

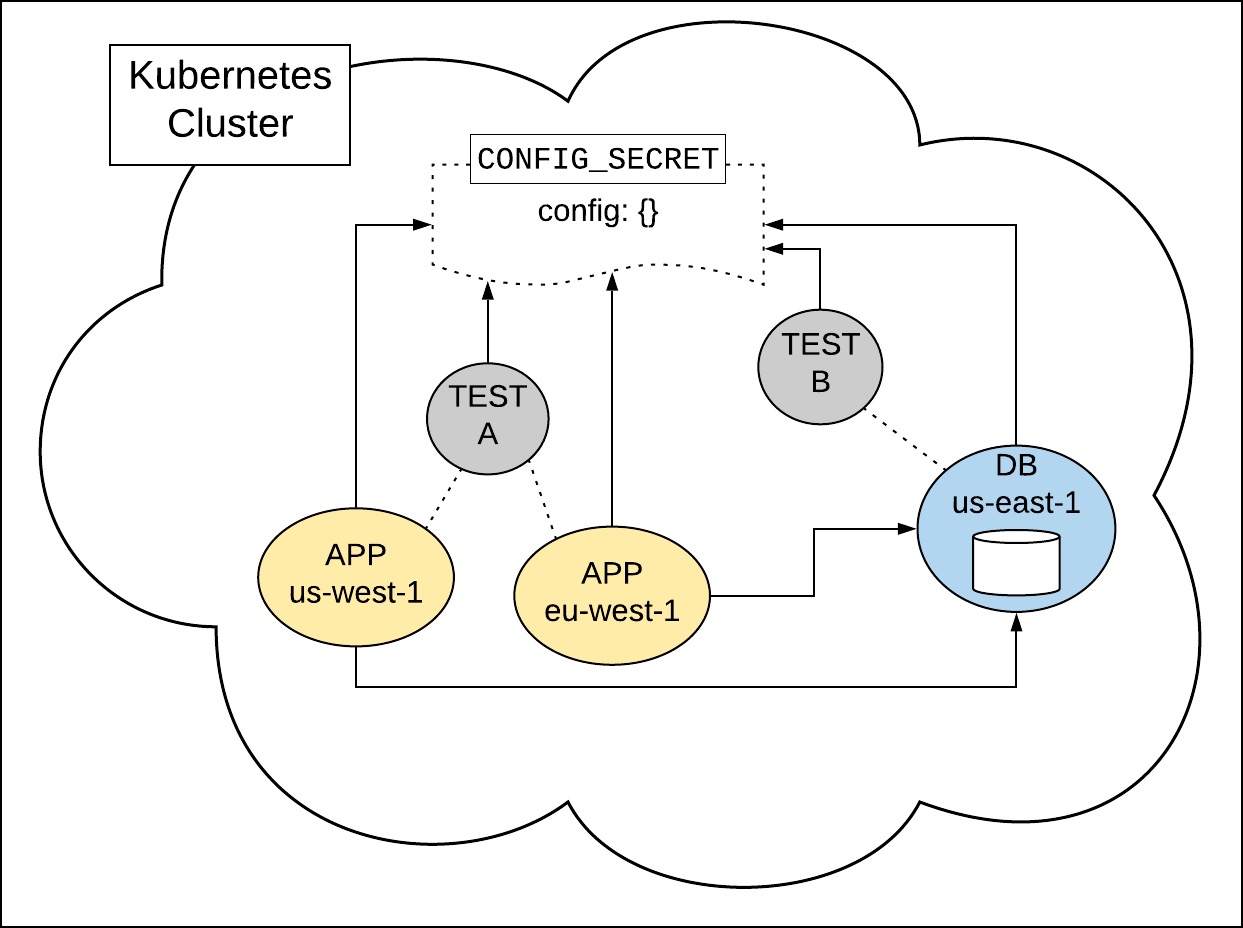

For instance, imagine a situation where one container is running on a node in the cluster located in the AWS region us-west-1, while another container running in parallel for the same application is thousands of miles away on a node in AWS eu-west-1. The database itself might be running on yet another node in AWS us-east-1. The performance implications may be profound depending on the location of the given container, so the only way to measure database access and performance accurately is to test from inside the cluster.
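Measuring from inside the cluster can be as simple as timing repeated calls from a test container and summarizing the distribution. The Python sketch below times any zero-argument callable; the default sample count is arbitrary, and the percentile uses the nearest-rank method.

```python
import math
import statistics
import time


def measure_latency(operation, samples=50):
    """Call operation() `samples` times and summarize wall-clock latency in milliseconds."""
    if samples < 1:
        raise ValueError("samples must be at least 1")
    timings = []
    for _ in range(samples):
        start = time.perf_counter()
        operation()
        timings.append((time.perf_counter() - start) * 1000.0)
    timings.sort()
    return {
        "samples": samples,
        "min_ms": timings[0],
        "median_ms": statistics.median(timings),
        # Nearest-rank 95th percentile: the ceil(0.95 * n)-th smallest timing.
        "p95_ms": timings[math.ceil(0.95 * samples) - 1],
        "max_ms": timings[-1],
    }
```

Running something like `measure_latency(lambda: run_query("SELECT 1"))` from test containers scheduled on different nodes would let you compare results by location; `run_query` is a hypothetical stand-in for a real database call.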

A way to run tests within the Kubernetes cluster is to containerize the test suites and then add the test containers to the cluster in which the application under test is running. The image below illustrates such a scenario.

The tests run internally in the cluster, and the results can be sent via HTTP to a test results collector outside the cluster for later analysis.
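Sending results out of the cluster needs nothing more than an HTTP POST from the test container. The Python sketch below uses only the standard library; the collector URL, read here from a hypothetical RESULTS_COLLECTOR_URL environment variable, and the JSON payload shape are assumptions, not part of any particular collector’s API.

```python
import json
import os
import urllib.request


def build_results_request(results, url):
    """Build a POST request carrying test results as JSON."""
    body = json.dumps(results).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_results(results, url=None, timeout=10):
    """POST results to the collector; the URL defaults to RESULTS_COLLECTOR_URL (hypothetical)."""
    url = url or os.environ["RESULTS_COLLECTOR_URL"]
    with urllib.request.urlopen(build_results_request(results, url), timeout=timeout) as response:
        return response.status
```

Keeping request construction separate from sending makes the payload easy to check in a unit test without a live collector.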

Having both applications and tests share the same configuration information via a common Secret makes deployment easier and information exchange more secure. Of course, measures need to be taken when basic configuration changes, such as a password change, occur. Environment variables populated from a Secret are read when a container starts, so updating the Secret does not change containers that are already running. Such updates are not difficult when Kubernetes’ rolling update feature is used: a new Secret is created, the Deployment is updated to reference it, and Kubernetes replaces the older pods with new ones that read the new values. If anything goes wrong, the Deployment can be rolled back to its last good revision, which still references the previous Secret.
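One way to make a rolling update pick up changed credentials is to give each version of a Secret a name derived from its contents and point the Deployment at the new name; Kustomize’s secretGenerator takes a similar approach by appending a content hash to the name. The Python sketch below is a hypothetical helper illustrating the idea; its hash scheme is an assumption and does not reproduce Kustomize’s suffix.

```python
import hashlib
import json


def versioned_secret_name(base_name, string_data, length=8):
    """Derive a Secret name that changes whenever the Secret's contents change."""
    # sort_keys makes the digest independent of dict ordering.
    canonical = json.dumps(string_data, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:length]
    return f"{base_name}-{digest}"
```

Because changing the referenced Secret name changes the Deployment’s pod template, Kubernetes rolls out new pods, and `kubectl rollout undo` restores the template that points at the previous Secret.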

Conclusion

Increasing adoption of Kubernetes technology offers benefits as well as challenges. As Kubernetes continues to proliferate across the IT landscape, we’re going to see more companies re-architect existing applications to take advantage of the value that Kubernetes provides.

Test practitioners will need to have a detailed understanding of Kubernetes in order to create tests and testing processes that ensure a high level of quality for the new and refactored applications that will be running under Kubernetes. Being able to use Kubernetes Secrets to implement effective test configuration is a good first step toward this goal.

Article by Bob Reselman, a nationally known software developer, system architect, industry analyst, and technical writer/journalist. Bob has written many books on computer programming and dozens of articles about topics related to software development technologies and techniques, as well as the culture of software development. Bob is a former Principal Consultant for Cap Gemini and Platform Architect for the computer manufacturer Gateway. In addition to his software development and testing activities, Bob is in the process of writing a book about the impact of automation on human employment. He lives in Los Angeles and can be reached on LinkedIn at www.linkedin.com/in/bobreselman.