The words “confidentiality” and “privacy” are often confused or perceived to be equivalent terms, but they actually represent completely different concepts. The precise definitions for these troublesome words often depend on context. They also can have very specific meanings in certain fields, such as when used in the medical and legal domains.

Confidentiality and privacy each affect the data that you provide and that is collected about you every day. To state the difference between confidentiality and privacy most simply, confidentiality is about the data, and privacy is about the individual. This article will focus on what these two terms mean in the world of information security.

Confidentiality

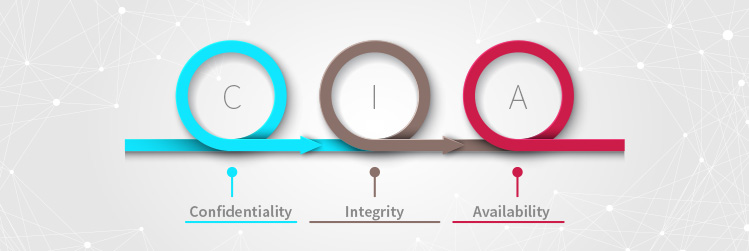

The three traditional tenets of information security, often called the CIA triad, are:

- Confidentiality: the assurance that only authorized parties can access data

- Integrity: the assurance that only authorized parties can modify data

- Availability: the assurance that data will be accessible by authorized parties on demand

A secure environment must support and provide for all three tenets.

Security professionals most often ensure confidentiality by using access controls and encryption. Access controls work well in controlled environments, but when data has to be sent across the internet, there is no trusted authority. That’s where encryption comes in. Encrypted data can only be accessed by individuals who possess the key to decrypt the data. Confidentiality is focused on preventing access of data by unauthorized parties.

Of course, I’m simplifying things here. Lots of new types of encryption are being explored that don’t rely solely, or sometimes even at all, on keys. Some of these interesting developments include functional encryption and the emerging domain of quantum cryptography.

Privacy

Privacy is all about the individual. It can basically be defined as the ability of an individual to be forgotten, or at least free from public attention. And in this sense, an individual doesn’t have to be a person — it can be an organization or any distinct entity.

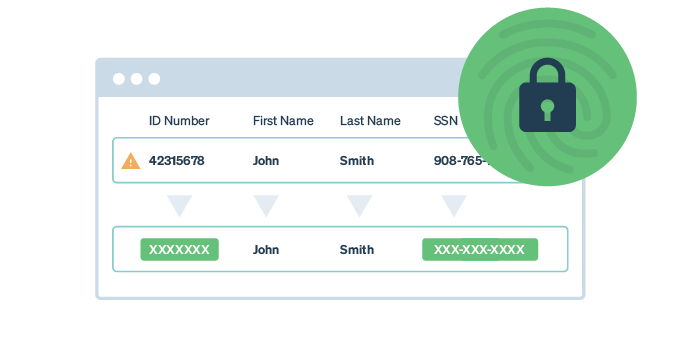

Guaranteeing privacy means guaranteeing that data cannot uniquely identify any individual. Protecting privacy is much harder than protecting confidentiality. Most organizations have processes to identify sensitive data they want to protect with confidentiality controls. For instance, military organizations have spent large amounts of resources protecting information. That’s why information that is classified is treated differently from “regular” information. The whole idea of classifying and granting users specific clearances is a type of access control called Mandatory Access Control (MAC).

Protecting privacy means that we have to look at the meaning of the data, not just the content of the data itself. Just having good confidentiality controls in place doesn’t guarantee privacy at all. For example, early attempts at privacy were based on the assumption that simply anonymizing obvious identifiers was enough, but several famous “fails” showed that even anonymized data can be very helpful in identifying individuals.

Join 34,000 subscribers and receive carefully researched and popular article on software testing and QA. Top resources on becoming a better tester, learning new tools and building a team.

Examples of Privacy “Fails”

There are two classic examples of good intentions that failed to protect the privacy of individuals. Privacy researchers found weaknesses in privacy controls and demonstrated how anonymization didn’t protect privacy at all.

Massachusetts Group Insurance Commission Data Leak

In 1997, the Massachusetts Group Insurance Commission (GIC) released “anonymized” data with details of every state employee’s hospital visits. The purpose of releasing this data was to promote research on improving health care quality and reducing cost.

An MIT computer science Ph.D. student at the time, Latanya Sweeney, used the data to conduct successful re-identification research. Sweeney purchased voting records for Cambridge, Mass., for $20. She was able to use that data and the GIC data to uniquely identify William Weld, the Massachusetts governor at the time.

Sweeney also published a widely referenced paper on privacy in 2000 in which she found that 87 percent of all Americans could likely be identified with only three pieces of information: ZIP code, gender and date of birth. She showed that privacy is such a difficult problem because so little data is actually needed to identify individuals.

AOL Search Data Leak

In August 2006, AOL released “cleansed” logs that contained 20 million search queries for 650,000 users. All data that directly identified users was anonymized, so AOL thought there was no way to associate users with the queries they submitted.

AOL was in a heated competition to gain market share and wanted to make their search engine algorithms more accurate, so they decided to release sample data and challenge researchers to compete for prizes to create a better algorithm.

Investigative reporters with The New York Times also looked at the data and wondered what they could learn from it. After analyzing the search queries, they found that user 4417749 searched for some interesting things, including:

- “landscapers in Lilburn, Ga”

- Several people with the last name Arnold

- “homes sold in shadow lake subdivision gwinnett county georgia”

The reporters used these searches, along with others from the same user, to determine that user 4417749 was actually Thelma Arnold, who lived in Georgia. They contacted her and she confirmed that the queries were hers.

The Future of Online Security

The lesson here is that well-intentioned actions to protect privacy are often not enough. The only way to protect privacy is for organizations that collect and handle personal data to do so responsibly. But that’s easier said than done.

Because private data is anything that can be used to uniquely identify an individual, it has great value to businesses. Private data allows organizations of all types to target their marketing efforts, and because of this inherent value of such data, many organizations have been collecting private data for years.

This practice of collecting, analyzing and even re-selling private data has gotten the attention of legislators. Many governing bodies are finally incorporating privacy concerns into regulation and legislation. Perhaps the most extensive effort to date is the European Union’s General Data Protection Regulation (GDPR). The GDPR goes way beyond any previous legislation to protect individual online privacy. Among many other things, it requires that all EU citizens be given clear notification of what personal data is being collected and stored, how it will be managed, and how it will be used. The GDPR also requires that users be able to request that all their private data be deleted upon demand.

We haven’t seen how much of an overall disruption the GDPR will be yet, but it has put online data privacy in the global spotlight. This groundbreaking regulation will make some things difficult for online business, but it is a great step forward for consumers.

As we see online activity and data collection maturing, it is important for all users to pay attention to the data you’re giving away. Pay attention and choose wisely. Your privacy is valuable and worth protecting. Read more posts on privacy on our blog.